LARA: A Light and Anti-overfitting Retraining Approach for Unsupervised Time Series Anomaly Detection

May 16, 2024

Feiyi Chen

Zhen Qin

Mengchu Zhou

Yingying Zhang

Shuiguang Deng

Lunting Fan

Guansong Pang

Qingsong Wen

Abstract

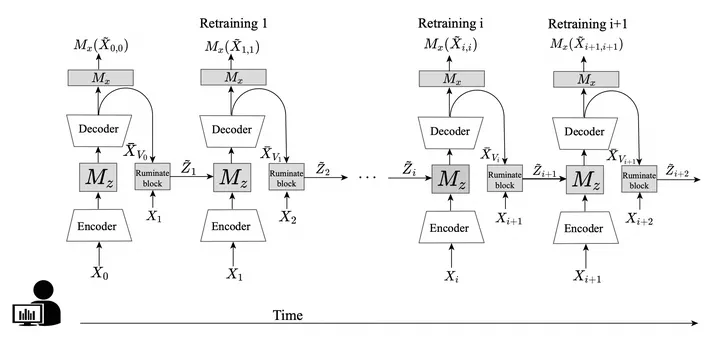

Most current anomaly detection models assume that the normal pattern stays the same over time. However, the normal patterns of web services can change dramatically and frequently. A model trained on old-distribution data becomes outdated and ineffective after such changes, and retraining the whole model whenever the pattern changes is computationally expensive. Moreover, when a normal pattern has just changed, there is not yet enough observation data from the new distribution, and retraining a large neural network with limited data is prone to overfitting. We therefore propose a Light and Anti-overfitting Retraining Approach (LARA) based on deep variational auto-encoders for time series anomaly detection. LARA makes three major contributions: 1) the retraining process is formulated as a convex problem, which prevents overfitting and lets retraining converge quickly; 2) a novel ruminate block leverages historical data without the need to store it; 3) we prove mathematically and verify experimentally that, when fine-tuning the latent vector and the reconstructed data, linear formations achieve the least adjusting error between the ground truths and the fine-tuned ones. Extensive experiments verify that retraining LARA with even a limited amount of data from the new distribution achieves performance competitive with state-of-the-art anomaly detection models trained with sufficient data. We also verify its light computational overhead.
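To make the idea of a linear adjustment fitted through a convex problem more concrete, here is a minimal sketch. It is not the LARA implementation: it assumes a simplified setting in which each latent dimension is corrected by its own scale and shift, fitted by ordinary least squares, which is a convex problem with a closed-form solution. The function names (`fit_affine_1d`, `fit_per_dimension`, `adjust`) and the toy data are hypothetical and chosen only for illustration.

```python
from statistics import fmean


def fit_affine_1d(x, y):
    """Closed-form least-squares fit of y ~ a * x + b (a convex problem)."""
    mx, my = fmean(x), fmean(y)
    var = sum((xi - mx) ** 2 for xi in x)
    if var == 0.0:
        # Constant input: only the shift can be identified.
        return 0.0, my
    cov = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    a = cov / var
    return a, my - a * mx


def fit_per_dimension(z_old, z_new):
    """Fit one (scale, shift) pair per latent dimension (illustrative assumption)."""
    dims = len(z_old[0])
    return [
        fit_affine_1d([z[j] for z in z_old], [z[j] for z in z_new])
        for j in range(dims)
    ]


def adjust(z, params):
    """Apply the fitted per-dimension linear adjustment to one latent vector."""
    return [a * zj + b for zj, (a, b) in zip(z, params)]


# Hypothetical toy data: "old" latent vectors and the targets they should map to.
z_old = [[0.0, 1.0], [1.0, 2.0], [2.0, 4.0], [3.0, 3.0]]
z_new = [[2 * u + 1, -v + 0.5] for u, v in z_old]

params = fit_per_dimension(z_old, z_new)
adjusted = [adjust(z, params) for z in z_old]
```

Because the objective is a convex quadratic in the scale and shift, the fit needs no iterative training and only a handful of samples, which mirrors in a very reduced form the motivation the abstract gives for a light retraining step on limited new-distribution data.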

Publication

in The Web Conference